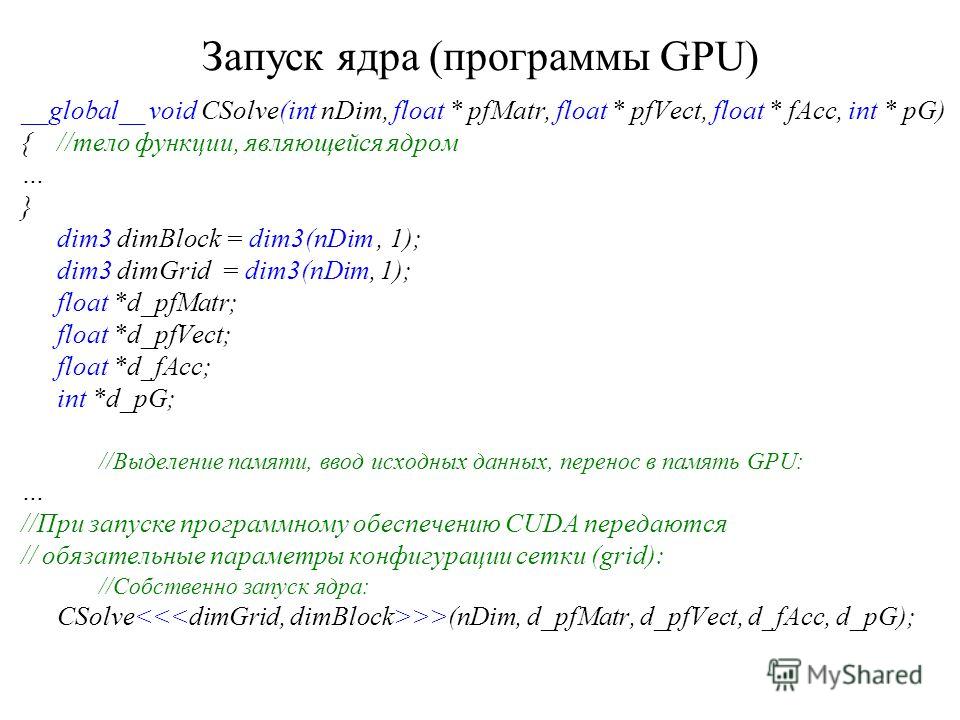

dim3 is an integer vector type that can be used in CUDA code. Its most common application is to pass the grid and block dimensions in a kernel invocation. It can also be used in any user code for holding values of 3 dimensions.

Given `dim3 dimBlock(32,1,1)`, the call `F<<<...>>>(x,y)` launches a grid of 128 blocks. Each block in the grid is also one dimensional and has 32 threads.

I am new to CUDA C GPGPU programming and found an example in the following pdf-file: Kernel function must specify the number of threads for each call (dim3). The kernel is `__global__ void add_matrix( float* a, float *b, float *c, int N )`, and it computes its indices as `int i = blockIdx.x * blockDim.x + threadIdx.x;` and `int j = blockIdx.y * blockDim.y + threadIdx.y;`. The host code includes `cudaMemcpy( c, cd, size, cudaMemcpyDeviceToHost );` and `cudaFree( ad ); cudaFree( bd ); cudaFree( cd );`.

I do understand everything but not the given block and grid parameters. `dim3 dimBlock( blocksize, blocksize )` means to me: 16*16 = 256 threads per block. `dim3 dimGrid( N/dimBlock.x, N/dimBlock.y )` means to me: N/dimBlock.x = 1024/16 = 64 and N/dimBlock.y = 64, so 64*64 = 4096 blocks per grid. Is that right? But why such a big 2D grid? I would have 256*4096 = 1,048,576 threads with that grid. Is that right?

First off, I'm fairly new to CUDA programming so I apologize for such a simple question. I have researched the best way to determine dimGrid and dimBlock in my GPU kernel call and for some reason I'm not quite getting it to work. On my home PC, I have a GeForce GTX 580 (Compute Capability 2.0). I can get my code to run properly on this PC. My GPU is populating the distance array of size 988*988. Here is part of the code: `#define SIZE 988`, the kernel `__global__ void createDistanceTable(double *d_distances, double *d_coordinates)`, and inside it `int col = blockIdx.x * blockDim.x + threadIdx.x;` and `int row = blockIdx.y * blockDim.y + threadIdx.y;`. My issue is I simply have not found a way to get this code to run properly on my laptop. My laptop's GPU is a GeForce 9600M GT (Compute Capability 1.1). I would greatly appreciate any guidance in helping me understand how I should approach the dimBlock and dimGrid for my kernel call on my laptop.

Compilation command (change architecture accordingly): `nvcc prog.cu -g -G -lineinfo -gencode arch=compute_11,code=sm_11 -o prog`

The accompanying code wraps CUDA calls in error checks: `#define CUDA_SAFE_CALL(err) _cuda_safe_call(err, __FILE__, __LINE__)` and `#define CUDA_CHECK_ERROR() _cuda_check_errors(__FILE__, __LINE__)`. These are backed by `_cuda_safe_call (cudaError err, const char *filename, const int line_number)` and `_cuda_check_errors (const char *filename, const int line_number)`; the latter calls `cudaError err = cudaDeviceSynchronize ()` and reports `err, filename, line_number, cudaGetErrorString (err)`. It also sets `#define BLOCK_SIZE 16 // set to 16 instead of 32 for instance`, defines `createDistanceTable (float *d_distances, float *d_coordinates)`, and cleans up with `CUDA_SAFE_CALL (cudaFree (d_coordinates))`.

With a 32x32 block on CC 2.0 or a 16x16 block on CC 1.1: cuda-memcheck.

With a 33x33 block on CC 2.0 or a 32x32 block on CC 1.1, cuda-memcheck reports `Program hit error 9 on CUDA API call to cudaLaunch`, followed by `Saved host backtrace up to driver entry point at error`, `Host Frame:/usr/lib/nvidia-current-updates/libcuda.so`, `Host Frame:/opt/cuda/lib64/libcudart.so.5.0 (cudaLaunch + 0x242)` and `Host Frame:/lib/x86_64-linux-gnu/libc.so.6 (__libc_start_main + 0xed)`.

The header comment for this error reads: This indicates that a kernel launch is requesting resources that can never be satisfied by the current device. ... per block than the device supports will trigger this error, as will requesting too many threads or blocks.

Another fragment ends its host-to-device copy with `cudaMemcpyHostToDevice))` and then runs the kernel with `dim3 dimBlock(16, 16);` and `dim3 dimGrid(divup(n, dimBlock.x), divup(n, dimBlock.y));` before launching `mykernel<<<...`.

The first and second arguments need to be swapped in the following calls: `cudaMemcpy (gpufoundindex, cpufoundindex, foundSize, cudaMemcpyDeviceToHost)` and `cudaMemcpy (gpumemoryblock, cpumemoryblock, memSize.` You are copying from the device to the host, and the destination pointer is the first argument in a `cudaMemcpy ()` call.
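The block and grid arithmetic discussed above can be checked on the host before launching anything. The following is a minimal sketch in plain C++ so that it builds without the CUDA toolkit. `Dim3` is a hypothetical stand-in for CUDA's `dim3`, and `grid_for`, `threads_per_block` and `block_fits` are illustrative helper names that do not come from the original posts. `divup` is written the way such a helper is commonly defined, since its body is not shown in the source. The per-block thread limits mentioned in the comments (512 on compute capability 1.x, 1024 on 2.0) are stated here as assumptions to verify against your device's properties.

```cpp
#include <cassert>

// Hypothetical stand-in for CUDA's dim3, so this sketch compiles without nvcc.
struct Dim3 {
    unsigned x, y, z;
};

// Ceiling division: how many blocks of `block` items are needed to cover `n` items.
unsigned divup(unsigned n, unsigned block) {
    assert(block > 0);
    return (n + block - 1) / block;
}

// Grid shape needed to cover an n x n problem with the given block shape.
Dim3 grid_for(unsigned n, Dim3 block) {
    return Dim3{divup(n, block.x), divup(n, block.y), 1};
}

// Total number of threads in one block.
unsigned threads_per_block(Dim3 block) {
    return block.x * block.y * block.z;
}

// True if the block stays within a per-block thread limit.
// Assumed limits: 512 threads on compute capability 1.x, 1024 on 2.0.
bool block_fits(Dim3 block, unsigned max_threads_per_block) {
    return threads_per_block(block) <= max_threads_per_block;
}
```

Under these assumptions, a 32x32 block (1024 threads) fits the 1024-thread limit but not the 512-thread one, and a 33x33 block (1089 threads) fits neither, which is consistent with the cuda-memcheck results quoted above. For the 988x988 table with 16x16 blocks, `divup(988, 16)` gives 62 blocks per dimension, covering 992x992 threads, so a kernel launched this way would need a bounds check such as `row < n && col < n`.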
